Product

May 11, 2026

How to evaluate the People Data your agent retrieves

Antonio Mallia

How freshness, coverage, diversity, and speed determine your agent's outcomes. A retrieval-layer rubric for founders building in the people-data space.

Most teams building AI agents are competing on a layer that's about to commoditize. The reasoning, the prompts, the orchestration: matchable. What stays different is the retrieval layer underneath, and most founders haven't built a vocabulary for evaluating it yet. This post is a four-dimension rubric for doing that honestly. We build retrieval infrastructure for people-data agents, and we wrote this to help you evaluate yours.

Where polished agents break

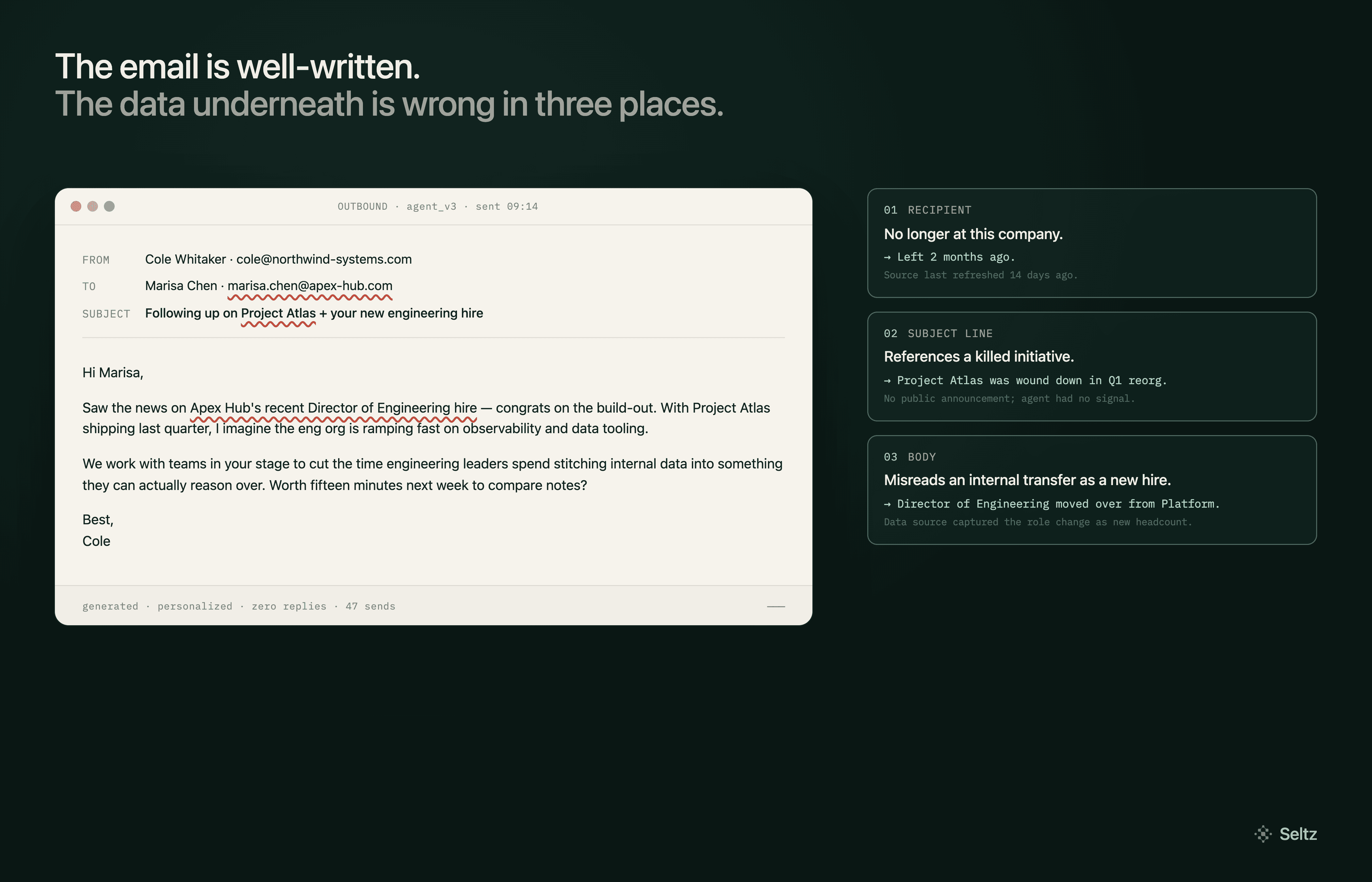

A sales agent sends a personalized email. The subject line references a product the prospect's company launched last quarter. The opening paragraph names a job title, Director of Engineering, and a recent hire the agent interpreted as a buying signal. The email is well-written. The rationale is clear. The reply rate, predictably, is zero. Why?

The prospect left the company two months ago. The product the agent referenced was killed in a reorg. The "recent hire" was an internal transfer the underlying data captured as a new role. The agent did exactly what its data sources said to do.

This is the failure mode every founder building an agent eventually confronts. The application layer can be excellent (clean reasoning, sharp prompts, smart orchestration) and still ship outcomes like this, because the data underneath isn't current, isn't deep enough, or isn't structured for what the agent needs to do with it.

You've probably read a dozen posts in the last year on what makes a moat in the AI era: workflow depth, brand, distribution, proprietary data loops. Most of it is right. What's missing is what it means specifically for agents.

For agent-native products, the choice of people-data infrastructure is an architecture decision. And in this category, most are building on a layer they don't control.

I'm writing this as a founder building the retrieval layer underneath some of these agents, so I'm biased. From what I've seen, both the quality of the people-data underneath and the retrieval layer built on top of it make or break the user experience.

We hope this framework can help you evaluate your current stack.

What good people data looks like in production

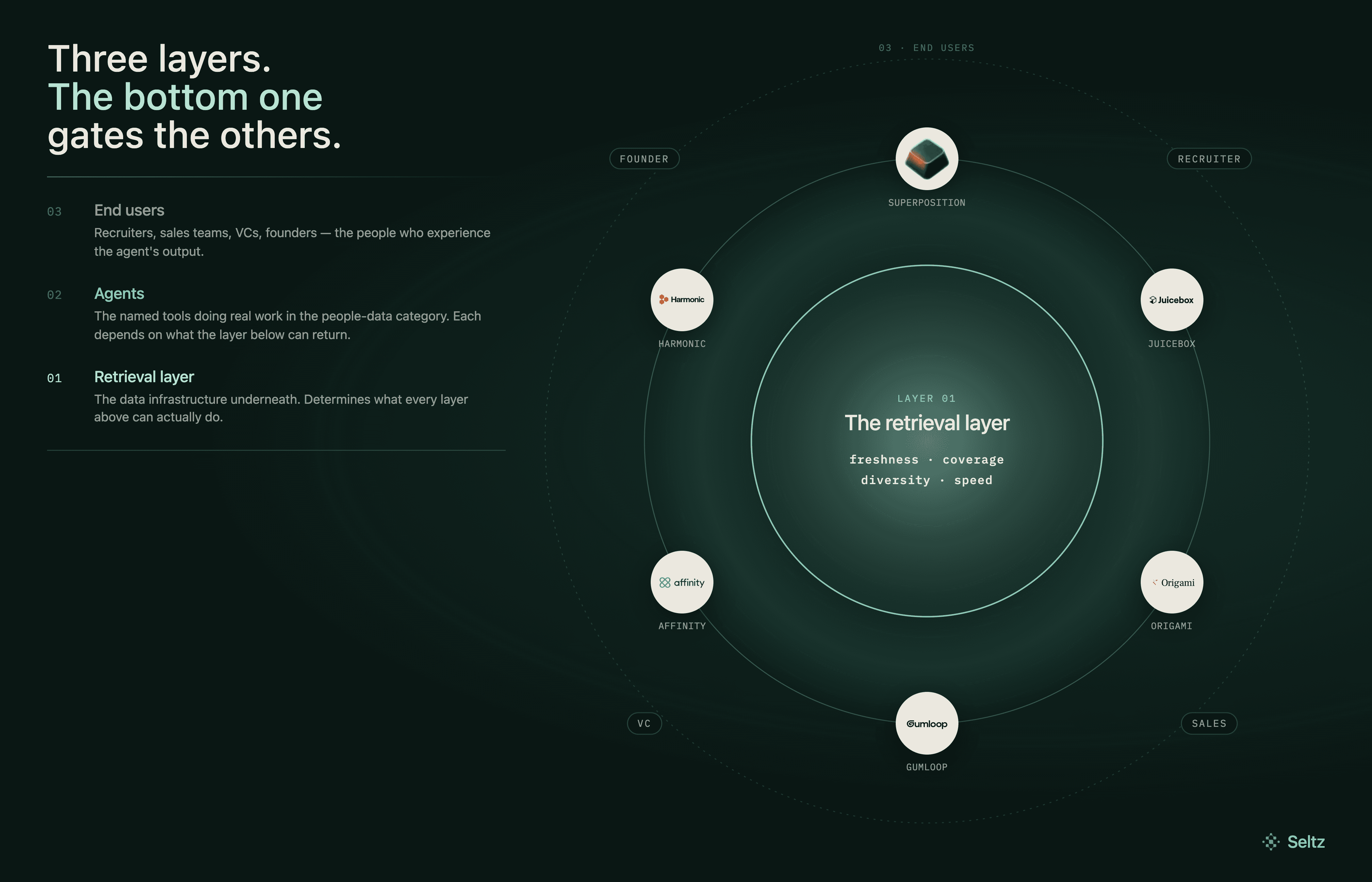

Look at what's shipping in this category right now. The agents winning attention are doing real work for real customers, and what makes them work is rarely the application layer alone.

Recruiting agents. Superposition runs voice-driven sourcing for technical hires: a founder describes the role, the agent comes back with curated matches. Juicebox built PeopleGPT, which lets recruiters describe the candidate they want in plain English and turns that into a live candidate population. Both are doing something real: they replace the calibration loop that used to take a recruiter weeks of back-and-forth with a hiring manager.

The user experience is excellent. It's also entirely a function of the people data underneath. The agent's reasoning is only as good as the candidates it can reach, and "reach" here means the breadth of the underlying profile data, the freshness of job titles, and whether someone who changed roles three weeks ago shows up correctly.

Sales and lead-research agents. Origami sends agents into the open web to find leads no static database surfaces; the founders' own framing is that 99% of the useful signal lives outside structured data.

Gumloop is a layer above this: builders use it to compose agents that do enrichment, research, outreach, deal qualification. Both are infrastructure for agents that touch live data, and both have to confront the same reality. An agent operating on a stale snapshot will write a confident, well-personalized email to someone who left the company. An agent operating on a fresh, comprehensive view of the web won't.

VC and deal-sourcing agents. Affinity built one of the few owned people-data layers in this category. The relationship graph it captures from customers' calendars and inboxes is genuinely Affinity's, and that owned data is most of what differentiates the product. The firm and company data layered on top comes from PitchBook, Crunchbase, and Dealroom, which Affinity transparently names.

Harmonic built a startup discovery engine that has invested more in the underlying index than most peers; their bet is that depth of coverage on private companies is the moat. Both are doing what the rest of the category should be doing: thinking about which layer they own and which they don't, and being honest about it.

The pattern across all six is the same. The reasoning, the orchestration, the agent design: all important, all matchable. The data underneath determines what's possible.

Why retrieval matters as much as the data

Once you start looking at agent products this way, three things become hard to unsee.

The first is that the people data behind most agents in this category is shared infrastructure, often along with the firm data and intent data layered alongside it. Bombora's intent data powers many of the intent-marketing platforms whose customers think of them as competitors. PitchBook, Crunchbase, and Dealroom collectively sit underneath most VC and deal-sourcing products on the market, including, transparently, Affinity. LinkedIn-derived profile data flows through dozens of recruiting and sales tools that present themselves as differentiated. The application layers compete fiercely. The data underneath is, more often than not, the same.

The second is that when the application layer levels (and it is leveling) what your agent actually retrieves from that shared source becomes the variable that decides which agent ships the better outcome. Two agents drawing from the same LinkedIn-derived data can produce dramatically different results depending on how the retrieval is built. The user doesn't see what the agent is drawing on, but the user does see the email that goes to the wrong person, the candidate who turned out to have left the role, the company that turned out to have already announced the round. They experience the retrieval as the product, even though they're using the application.

The third is the part most founders haven't reckoned with yet. If your retrieval over a shared source is the same as your competitor's retrieval over the same source, your outcomes will converge. The clever orchestration, the agent design, the prompt engineering: these will be matched. The reasoning capability you're building on top of GPT-class models is the same reasoning capability your competitors will build on top of GPT-class models in three months. What stays different is what your agent actually gets back when it queries, and that's a function of the infrastructure between the source and the agent, not the source itself.

The question every founder building an agent should be asking: of the things my product depends on, which ones am I genuinely doing better than my competitors, and which ones am I just sharing with them?

How to evaluate a retrieval layer

If the retrieval layer is what determines whether your agent ships better outcomes than your competitors', the question becomes practical: how do you actually evaluate one? Most founders haven't built a vocabulary for this yet. Four dimensions matter, and they compound.

Freshness. How quickly a change at the source becomes visible to your agent. Someone updates their LinkedIn profile, accepts a new role, leaves a company, joins a new one. How long until your retrieval reflects that? Most providers in this category rebuild their indexes on weekly or monthly cycles, which means there's a window measured in weeks where your agent is operating on a stale view of the world.

For you, the founder, this is the single biggest determinant of how often your agent ships polished failures. The email to the prospect who left two months ago is a freshness failure, full stop. For the user, freshness is what separates an agent that catches the candidate before they're snapped up from one that surfaces them three weeks late. Freshness is the dimension your customers will notice first when something goes wrong, even though they won't have the vocabulary to call it that.

Coverage. How much of the underlying source the retrieval layer can actually return. Not every retrieval over LinkedIn returns the same volume of data. Some providers return truncated profiles. Some return partial fields. Some return what's cached from a crawl six months ago. The agent's reasoning ceiling is set by what shows up in the response; if the data isn't in the response, it doesn't exist as far as the agent is concerned.

For you, coverage determines what your agent can actually do. A recruiting agent that gets back partial profiles makes worse matches than one that gets full work history, education, skills, and recent activity. For the user, coverage is the difference between an agent that knows enough to be useful and one that confidently surfaces the wrong recommendation because it was missing the field that would have flagged the mismatch.

Diversity. Whether the retrieval returns a range of high-quality results or returns the same top-ranked record over and over. Most retrieval was built for human users who click the top result. Agents need different tradeoffs: when an agent runs a query, the best outcome usually comes from comparing several candidate matches rather than acting on the single highest-ranked one. A retrieval layer that ranks for clicks instead of for agent reasoning gives your agent worse raw material to work with.

For you, diversity is the dimension that determines whether your agent's reasoning has anything to actually reason over. For the user, diversity is what makes the agent feel like it considered options rather than reflexively returning the obvious answer. The agent that surfaces the candidate the algorithm wouldn't have ranked first, but who's actually the best fit, wins on this dimension.

Speed. How fast the retrieval returns results. This sounds like a perf metric and is treated like one in most evaluations. It's actually a quality multiplier.

For the user, faster retrieval means the agent feels responsive. The difference between a tool that takes three seconds to come back and one that takes thirty changes whether the user trusts the tool enough to keep using it. For you, the more important effect is what speed enables on the quality side. When a retrieval takes 200ms instead of 2 seconds, your agent has the budget to query multiple times, compare results, re-rank, and self-correct before responding to the user. Two well-built agents on the same source data will produce dramatically different results if one can afford five queries per user request and the other can only afford one. Speed isn't a perf concern; it's the dimension that determines how much reasoning your agent can do at all.

These four dimensions compound. A retrieval layer that's fresh, deep, diverse, and fast doesn't just produce better outcomes than one that scores well on two of those dimensions. It produces categorically different outcomes, because each dimension gates what the others can actually deliver. Stale retrieval over deep coverage still gives you stale answers. Diverse retrieval at high latency means your agent only gets to ask once. The dimensions multiply each other, which is why getting two of them right and skipping the others doesn't get you 50% of the value.

How to achieve people data nirvana

For a founder building an agent in this category, the four-dimension question lands as a strategic choice. Given the rubric, how do you actually get to a retrieval layer that scores well on all of them? Three honest options.

The first is to build the people-data infrastructure yourself. Own the crawling, the ingestion, the refresh architecture, the retrieval layer, the legal posture. This is the hardest path. It pulls engineering attention away from the application layer at exactly the moment when the application layer needs to be moving fast. Most agent founders are not set up to take this on, and the opportunity cost of trying isn't worth it. But for a small number of teams whose entire product depends on retrieval their competitors can't match, building is the only path that gets there.

The second is to partner with someone who has built that infrastructure. Find a provider whose architecture, refresh cadence, coverage, and retrieval design align with what your agent has to do for the user. Build the application layer on infrastructure you don't have to maintain. The trade-off is dependency on someone else's roadmap. The benefit is that you get to spend your engineering attention on the part of the product your customers actually evaluate. This is the path most agent companies will take if a people-data infrastructure provider exists that fits their use case.

The third is to keep competing at the application layer with the same retrieval over shared sources as your competitors and accept the consequences. Differentiate on UX, agent design, workflow depth, brand. This is viable in some categories. It's becoming difficult in categories where AI is flattening application-layer differentiation faster than any of us expected.

There isn't one right answer. Different agents in different categories should make different choices. The mistake is not making the choice consciously.

How we'd like to help

This is the question I've been working on at Seltz. We're building a knowledge provider for agents: a people-data layer with owned crawling, real-time refresh, and full-document retrieval, designed from the ground up for agent consumption rather than human browsing. The conversation about moats in this category has been happening one layer too high, and most of the founders I talk to are starting to feel it. The four-dimension rubric above is the one we use internally to evaluate ourselves.

If you're a founder at one of the named tools or any of the dozens of others building agents in this space, and the people data question is on your mind, the door is open. We'd happily walk through your current retrieval infrastructure and show you how Seltz scores on each of the four dimensions above. Book a demo or reach out directly. The conversation is more useful than the conclusion.

Related Articles